Data Permissions vs Knowledge Governance

There’s a line I keep hearing about how Microsoft Copilot respects your document permissions.

That’s true. It’s also almost entirely beside the point.

Permissions answer one question. Regulated environments need an answer to a different one. Conflating the two is how organisations end up with compliance exposure they didn’t see coming.

What permissions actually control

Copilot does respect Entra identity-based permissions. If a user doesn’t have access to a SharePoint site, content from that site won’t surface in their results. This is real, it works, and it’s important for information security.

But the question it answers is: who can see this content?

That is not the same question as: should this content be trusted?

Microsoft knows identity. It knows which user is asking and which content they’re allowed to see. What it doesn’t know, and was never designed to know, is whether any given piece of content is approved, current, or authoritative.

Those are different questions. In a regulated environment, the second one matters most.

What governance actually means

In any environment where accuracy carries consequences, governance means being able to answer a specific set of questions about a specific piece of content:

- Is this version approved?

- Who owns it?

- When was it last reviewed or changed?

- If this procedure and that document say different things, which one is right?

A real knowledge management system built for a regulated contact centre, such as a healthcare provider or a financial services organisation, can answer all of those questions. It has approval workflows, content ownership, version control, and review cycles baked into the content lifecycle.

None of that transfers when content is indexed into Microsoft Graph.

The index has no concept of approved status. It has no mechanism for recording who owns a piece of content or when a human with actual accountability last reviewed it.

The governance metadata that makes content trustworthy doesn’t travel with the content into the index.
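To make the loss concrete, here is a deliberately simplified sketch. Both record types are hypothetical illustrations, not the schema of Microsoft Graph or any other product: a governed article carries version, status, owner and review date, while a general-purpose index entry only has room for an ID, the text, a vector and an access list. The sample article and its values are invented.

```python
from dataclasses import dataclass, fields
from datetime import date

# Hypothetical record in a governed knowledge management system.
@dataclass
class GovernedArticle:
    article_id: str
    text: str
    version: int
    status: str          # e.g. "approved", "draft", "retired"
    owner: str           # the accountable content owner
    last_reviewed: date

# Hypothetical entry in a general-purpose semantic index:
# content, a vector for similarity search, and who may see it.
@dataclass
class IndexEntry:
    doc_id: str
    text: str
    embedding: list[float]
    allowed_groups: set[str]

# Fields of GovernedArticle that have somewhere to go in IndexEntry.
CARRIED = {"article_id", "text"}

def to_index_entry(article: GovernedArticle, embedding: list[float],
                   allowed_groups: set[str]) -> IndexEntry:
    # Only the ID, the text and the access list cross over.
    return IndexEntry(article.article_id, article.text, embedding, allowed_groups)

# Invented sample data.
article = GovernedArticle("KB-101", "Refunds over 500 require supervisor sign-off.",
                          version=3, status="approved", owner="Compliance team",
                          last_reviewed=date(2024, 5, 1))
entry = to_index_entry(article, [0.12, 0.87], {"contact-centre-agents"})

lost = sorted(f.name for f in fields(GovernedArticle) if f.name not in CARRIED)
print("Governance metadata left behind:", lost)
```

Permissions survive the trip (`allowed_groups`); version, status, owner and review date have nowhere to land.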

What happens in the index

When content is indexed into Graph for Copilot, it sits alongside everything else that’s been indexed:

- SharePoint pages

- Teams chat history

- Documents someone created this morning

- Meeting transcripts

- Standard Operating Procedures (SOPs) that were retired six months ago but never deleted

The relevance model doesn’t know what’s right. It knows what matches. Cosine similarity measures semantic proximity: think of it as a “sounds like” score, not a “should be trusted” score. That’s its whole job. It doesn’t check authority. It doesn’t check freshness. It will surface a draft someone left in a shared drive just as happily as a procedure your compliance team signed off.
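A minimal sketch of the “sounds like” score, using toy three-dimensional vectors invented for illustration (real embeddings have hundreds of dimensions, and this is not any vendor’s ranking code). Each document carries a `status` field, but the ranking never reads it.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: semantic proximity only."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy embeddings, invented for illustration.
query = [0.9, 0.1, 0.3]
documents = {
    "SOP-42 v2 (retired, never deleted)": {"vector": [0.85, 0.15, 0.3], "status": "retired"},
    "SOP-42 v3 (approved)": {"vector": [0.6, 0.5, 0.4], "status": "approved"},
    "Draft left in a shared drive": {"vector": [0.7, 0.2, 0.5], "status": "draft"},
}

# Rank purely by similarity. Note that "status" is never consulted.
ranked = sorted(
    documents,
    key=lambda name: cosine_similarity(query, documents[name]["vector"]),
    reverse=True,
)
for name in ranked:
    score = cosine_similarity(query, documents[name]["vector"])
    print(f"{score:.3f}  {name}")
```

With these made-up vectors the retired SOP ranks first (about 0.998), the draft second (about 0.950) and the approved procedure last (about 0.848). Nothing in the score could have told them apart on authority.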

It gets more specific, and more problematic, than that.

Configure five knowledge sources in Copilot Studio and one will be silently dropped. The system only takes the top four. Microsoft’s documentation does not tell you which source loses.

Relevancy is also personal. Copilot factors in people insights, your Microsoft 365 network, your collaboration history. Two agents, same contact centre, same question. Potentially different answers, because their social graphs don’t match.

That is not acceptable anywhere that requires consistent answers.

In a regulated environment, every agent needs the same authoritative response to the same question. A relevance model that varies by social graph cannot guarantee that.
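Microsoft does not publish how people insights feed into relevance, so the sketch below is a toy model of the effect, not Copilot’s formula. It assumes a shared content score plus a small per-user boost for content written by people the user collaborates with. All names, scores and the `PERSONAL_WEIGHT` value are hypothetical.

```python
# Hypothetical shared relevance scores for two candidate answers.
CONTENT_SCORE = {"KB-101 (approved)": 0.82, "Team notes (unreviewed)": 0.78}
AUTHOR = {"KB-101 (approved)": "compliance", "Team notes (unreviewed)": "sam"}

# Hypothetical collaboration affinity between each agent and each author (0 to 1).
AFFINITY = {
    "agent_a": {"compliance": 0.1, "sam": 0.1},
    "agent_b": {"compliance": 0.1, "sam": 0.9},  # works closely with Sam
}
PERSONAL_WEIGHT = 0.1  # hypothetical weight given to the personal signal

def personalised_score(agent: str, doc: str) -> float:
    # Same content score for everyone, plus a boost that depends on who is asking.
    return CONTENT_SCORE[doc] + PERSONAL_WEIGHT * AFFINITY[agent][AUTHOR[doc]]

def top_result(agent: str) -> str:
    return max(CONTENT_SCORE, key=lambda doc: personalised_score(agent, doc))

for agent in AFFINITY:
    print(agent, "->", top_result(agent))
```

Same question, same content, same index: `agent_a` is grounded on the approved article, while `agent_b` gets the unreviewed team notes because of who they work with.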

The question no one can answer

Here’s a question worth putting to anyone selling Graph indexing as a knowledge governance solution:

When the graph index blends content from different sources that say conflicting things, what determines which one grounds the response?

Microsoft’s documentation doesn’t answer this, because there is no documented arbitration mechanism. Retrieval happens from one data source at a time. The large language model then synthesises across sources. There’s no published mechanism for source priority, authority weighting, or conflict resolution between knowledge systems.

For a general enterprise search use case, that’s an acceptable trade-off. You’re looking for information, not rendering a compliance decision.

For a regulated contact centre where a customer interaction carries legal and regulatory weight, it’s an audit nightmare.

If a regulator asks you to produce the exact content that grounded a specific customer interaction, you need to be able to answer that question cleanly. Graph indexing does not support a clean answer.
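For contrast, here is a minimal sketch of the arbitration and audit trail described as missing above. It assumes governance metadata (status, source, version) is still attached to each candidate at retrieval time, which is exactly what a shared index loses. `SOURCE_PRIORITY`, `Candidate`, `GroundingRecord` and the sample data are hypothetical; this is not a Microsoft, Salesforce or any other vendor API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical priority of knowledge sources (lower = more authoritative).
SOURCE_PRIORITY = {"km_system": 0, "sharepoint": 1, "teams": 2}

@dataclass(frozen=True)
class Candidate:
    article_id: str
    version: int
    status: str       # "approved", "draft" or "retired"
    source: str       # a key of SOURCE_PRIORITY
    relevance: float  # similarity score from retrieval

@dataclass(frozen=True)
class GroundingRecord:
    interaction_id: str
    article_id: str
    version: int
    source: str
    retrieved_at: datetime

def select_grounding(candidates: list[Candidate]) -> Candidate:
    approved = [c for c in candidates if c.status == "approved"]
    if not approved:
        # Refuse rather than fall back to unapproved content.
        raise LookupError("no approved content matches this query")
    # Source authority decides first; relevance only breaks ties within a source.
    return min(approved, key=lambda c: (SOURCE_PRIORITY[c.source], -c.relevance))

def ground_interaction(interaction_id: str, candidates: list[Candidate],
                       audit_log: list[GroundingRecord]) -> Candidate:
    chosen = select_grounding(candidates)
    # Record exactly which article and version grounded this interaction.
    audit_log.append(GroundingRecord(interaction_id, chosen.article_id, chosen.version,
                                     chosen.source, datetime.now(timezone.utc)))
    return chosen

candidates = [
    Candidate("SOP-42", 2, "retired", "sharepoint", 0.95),
    Candidate("Team notes", 1, "draft", "teams", 0.90),
    Candidate("Intranet FAQ", 4, "approved", "sharepoint", 0.88),
    Candidate("KB-101", 3, "approved", "km_system", 0.80),
]
audit_log: list[GroundingRecord] = []
chosen = ground_interaction("CALL-0001", candidates, audit_log)
print(chosen.article_id, audit_log[0])
```

The least relevant candidate wins here, because authority is decided before relevance, and the audit log can answer the regulator’s question with an article ID and version rather than a reconstruction.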

This isn’t a Microsoft problem

It isn’t even a tool problem. These systems do exactly what they were built to do.

The problem is what you’re pointing them at.

Salesforce Data Cloud is explicitly designed to blend data from multiple sources; that’s its value proposition. Approved procedures sit alongside CRM interaction history, user notes, customer sentiment data, and marketing attributes. Governed knowledge and ungoverned data, combined in a single index.

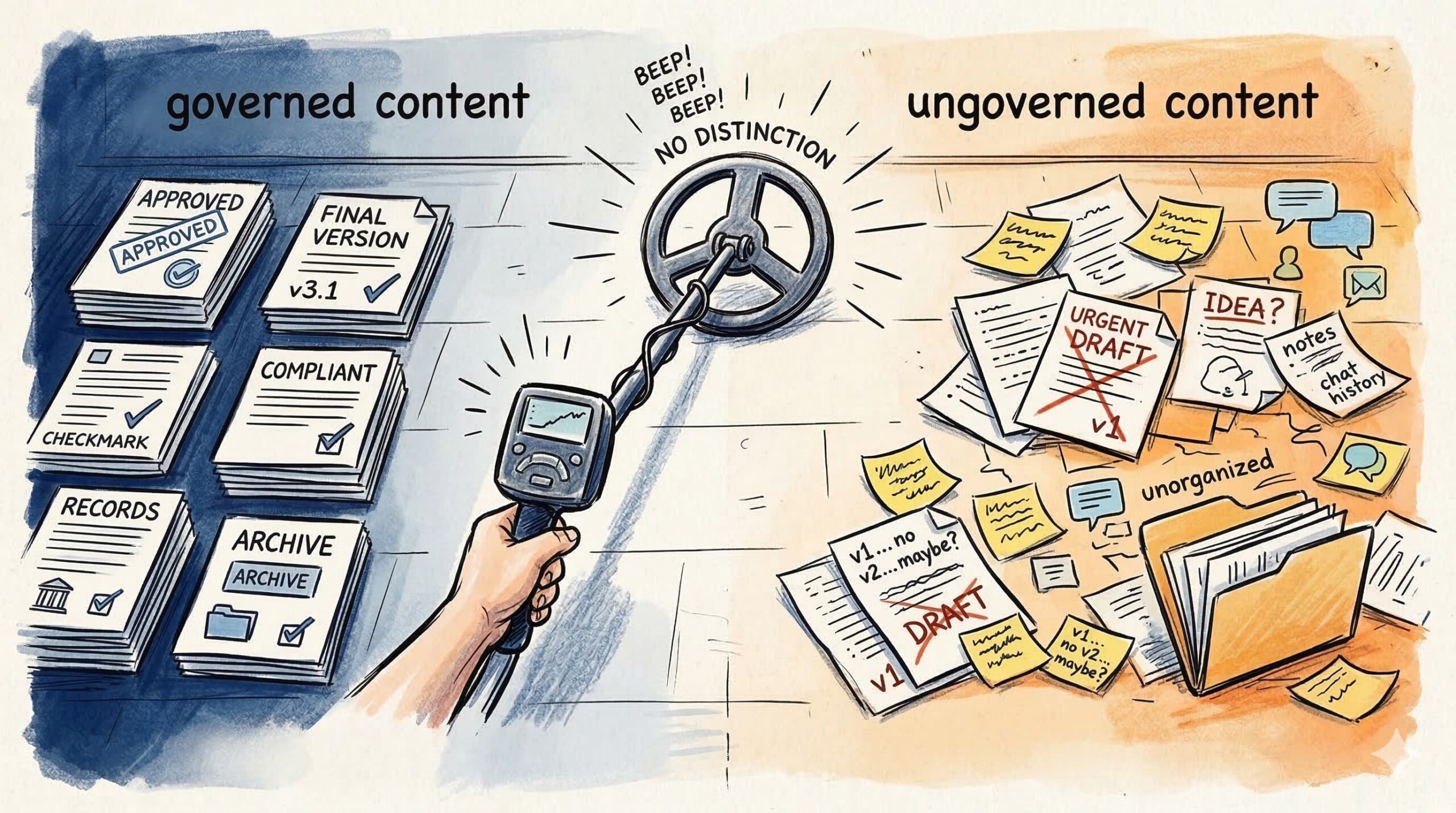

Glean indexes everything without distinction, which works well until some of that content is approved and some isn’t.

Elasticsearch, any vector store, any general-purpose semantic index: exactly the same outcome. Not because they’re broken. Because they were built to find relevant content, not authoritative content.

The moment approved procedures leave a governed system and enter a shared index alongside uncontrolled content, you lose five things:

- Version authority

- Approval status

- Content ownership

- Content conflict resolution

- Retrieval audit trail

The index doesn’t care that your knowledge content was authored, reviewed, and approved through a documented governance process. It cares that the semantic content is relevant to the query. That’s what it was built to do.

Where the risk actually lives

To be clear: this isn’t AI scepticism. The productivity case for Copilot and tools like it is real.

I use Copilot. It’s genuinely useful. It saves me from endless searching through emails, Teams chats, meeting transcripts, and channel threads. That’s exactly what it was designed for: helping knowledge workers find things faster across the content they generate every day.

None of what I’m searching for is governed. None of it is compliance-driven. It doesn’t matter if Copilot surfaces an email I sent last week or a draft someone never finished. The stakes are low. Discovery is its only job.

That’s not the contact centre use case.

Contact centre knowledge is different by design. It’s authored, reviewed, approved, and version-controlled for a reason. When an agent gives a customer an answer, that answer has to be the right one: not the most semantically relevant one, not the one that matches their social graph, but the authoritative one.

The problem is architectural. General-purpose enterprise AI was built for discovery. It was not built for environments where the provenance of an answer matters after the fact. That’s a different job entirely.

When organisations use discovery infrastructure to do governance work, they create a gap between what they believe their AI is doing and what it’s actually capable of.

The permissions are respected. The governance is not.

The question the industry should be asking isn’t “can we use AI on our knowledge?”

It’s “does our AI know the difference between what’s approved and what someone drafted yesterday?”

Most enterprise index architectures cannot answer that question. And that’s where the risk actually lives.

Are you drawing this distinction in your organisation? I’m particularly interested in where teams in regulated environments are landing. It’s a conversation I keep having, and the answers vary more than you’d expect.
